Advanced Computing In Pharma & Medtech: How Cognitive Biases Can Cost You Millions

By Elliot Turrini and Ben Locwin

The world economy is experiencing a revolution fueled predominantly by the ability and competitive need to innovate and reduce costs by leveraging ever-more sophisticated computing technologies — particularly artificial intelligence, robotics, augmented/virtual reality, and IoT (Internet of Things). A good example is the automotive industry, where competition is quickly shifting from the technologies comprising the vehicle to the sophisticated computing technologies that will enable autonomous driving. This move from the "widget" to the "use of the widget" is a deeply important paradigm shift.

The life science industry is another example, as three forces are combining to make it vital for these companies to profitably use sophisticated computing technologies:

- Huge profit opportunity from looming healthcare services shortage: Many experts predict a large shortage of physicians and nurses in the United States over the next decade, which provides life science companies the opportunity to use computing technologies to build very profitable medical devices, digital therapeutics (DTs), prescription digital therapeutics (PDTs), and healthcare services. The number of patients who are being treated — in part or in full — by digitized approaches is growing dramatically, and digital therapies are the only way to treat patients of the future, given healthcare's rising resource shortages.

- Substantial competitive pressure: Today's business world is in the middle of a computing technology revolution, which is creating immense pressure to use increasingly sophisticated computing technologies (like AI and IoT) to innovate offerings and operations.

- Healthcare services are primed for disruptive innovation: The late HBS Professor Clayton Christensen, in his book The Innovator's Prescription, explained at great length how the healthcare industry has been slow to adopt new technologies, leaving it vulnerable to (and in need of) disruptive innovation, particularly by harnessing sophisticated computing technologies.

To reap these profits and survive this competition, life science companies must use sophisticated computing technologies to develop and deploy new operational methods and offerings (e.g., precision therapeutics and services). Some companies are already doing this:

- Targeted digital therapeutics are steering disease monitoring, patient compliance, and treatment utility.

- Google's AI outperformed radiologists last year in reading lung radiographs for cancerous lesions.

- Microsoft's InnerEye has achieved impressive results in segmenting and visualizing tumors using radiograms and machine learning (ML) algorithms.

- Precision-guided surgery medtech companies are using AI and ML methods to improve outcomes and help guide surgeons during complex surgeries.

- The collection and analysis of real-world patient data and behaviors outside of a care setting is allowing patients to have a more active role in viewing and managing their conditions.

But, in their race to leverage sophisticated computing technologies, life science companies face a hidden, insidious challenge — avoiding the cognitive biases that can cause them to unwittingly exacerbate already business-threatening cyber risks.

Business-Threatening Cyber Risks

A simple but compelling way to understand how cyberattacks pose business-threatening risks is to examine what happened to Merck in 2017-18 when it suffered from the NotPetya cyberattack. Merck has publicly admitted these facts about the attack:

- Before NotPetya, Merck had an expensive cybersecurity program.

- NotPetya inflicted about $1.3 billion in damage on Merck.

- NotPetya crippled Merck's in-house active pharmaceutical ingredient (API) manufacturing and affected its formulation and packaging systems, as well as R&D and other operations.

- The attack had a $260 million impact on sales, a $330 million impact on marketing and administrative expenses and production costs, and a $200 million impact on 2018 sales through residual backlogs.

- It took six months to restore most operations.

- It impeded production of one of Merck's best-selling products even as demand was growing. That forced Merck to borrow $240 million worth of Gardasil doses from the CDC's stockpile due to the "temporary production shutdown resulting from the cyberattack, as well as overall higher demand than originally planned."

- Merck has said that it cost $125 million through a reduction in sales because of its inability to meet demand for Gardasil 9.

Life science companies face the additional financial risk that cyberattacks can cause their offerings — e.g., digital medical devices and prescription digital therapeutics — to malfunction in ways that lead to expensive recalls, regulatory fines, and/or almost unlimited civil liability from lawsuits. Plus, using sophisticated, relatively new computing technologies exacerbates these already business-threatening risks, as illustrated by the "convenience overshoot."

The Convenience Overshoot

The convenience overshoot, according to Jerold Prothero, Ph.D., CEO of Asptrapi, "is a natural (if unfortunate) tendency for new technologies to favor convenience over safety: that is, to focus on the benefits of the technology more than on mitigating its potential adverse effects." The convenience overshoot often creates dangerous delays in safety and security, as illustrated by the following examples:

- The 47-year gap between the introduction of the Ford Model-T in 1909 and the introduction of seat belts as an optional feature in some Ford cars in 1956.

- The roughly 70-year gap between the introduction of the first steam-powered locomotive in 1804 and of a safe braking system, invented by George Westinghouse in the 1870s.

- Similar lengthy time gaps between the introduction of coal power, the pesticide DDT, and CFC coolants and the enactment of legislation to limit their unwanted consequences.

Unfortunately, even without the major pressure from today's computing technology revolution, computing security "is a case of a traditional convenience overshoot, magnified to alarming proportions by the power of our computer tools," according to Prothero. Today's revolution has the potential to substantially increase the convenience overshoot in even more significant ways.

The bottom line is that (1) this computing technology revolution exerts substantial pressure on life science companies to innovate faster by quickly adopting more and more sophisticated computing technologies — particularly AI and IoT — and (2) using sophisticated new computing technologies to innovate can increase the already dangerous convenience overshoot of computing technologies.

Cognitive Biases Can Exacerbate Your Cyber Risks

Cognitive biases are systematic errors in decisions and judgments that occur when people process and analyze information. You can think of them as common, hidden, and harmful decisional and interpretive habits. While many different cognitive biases can exacerbate your already business-threatening cyber risks, the two main culprits are positive illusion and confirmation bias.

Positive illusion refers to unrealistic optimism about the future. The brains of most people are positive illusion factories — for example:

- Only 2% of high school students think their leadership skills are below average.

- Eighty percent of drivers think they are better than average.

- Twenty-five percent of all people believe they are in the top 1% in their ability to get along with others.

- Ninety-four percent of college professors report doing above-average work.

- People think they are at lower risk than their peers for heart attacks, cancer, and even food-related illnesses.

In business, positive illusion can pose significant problems by preventing decision makers from fully understanding and addressing risk when making decisions.

Confirmation bias refers to the human tendency to seek out information that bolsters (i.e., confirms) existing beliefs. When collecting information, many people select information that supports their preconceptions. Professor Dan Lovallo, a leading expert on the psychology of business decisions, said in the book Decisive: How to Make Better Choices in Life and Work, by Chip Heath and Dan Heath (Crown Business 2013), that confirmation bias "is probably the single biggest problem in business, because even the most sophisticated people get it wrong."

How Positive Illusion And Confirmation Biases Combine To Exacerbate Cyber Risk

Positive illusion can impede accurate decisions even when decision makers have access to all the important risk information. It can cause decision makers to undervalue risks and favor the lure of positive results. Confirmation bias can exacerbate this problem by both (1) leading decision makers to ignore or exclude important risk information and (2) reducing decision makers' receptivity to that risk information even when forced to confront it. Together, these cognitive biases operate like a smoke screen that prevents decision makers from properly accounting for risks that they do not already believe in.

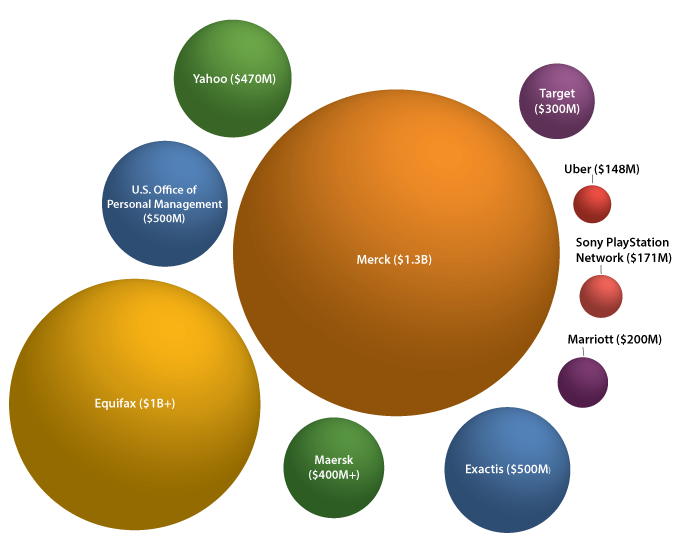

Let's apply these insights to cyber risk, which refers to the risk that cyberattacks will harm a business financially. The dangerous mix of positive illusion and confirmation bias can lead to the irrational belief that a company has properly protected itself from cyberattack. This arises from companies undervaluing cyber risks and mishandling (particularly by overestimating) their mitigation. Every company that suffered million- and even billion-dollar losses from cyberattacks (e.g., Equifax $1B+, Merck $1.3B, U.S. Office of Personnel Management $500M, Exactis $500M, Maersk $400M+, Yahoo $470M, Target $300M, Marriott $200M, Sony PlayStation Network $171M, Uber $148M) fell victim to some form of cyber risk positive illusion and confirmation bias.

The Solution — A Better Decision-Making System

The traditional rigorous analytical approach to decision-making does not always adequately mitigate the harm from cognitive biases (see "A Structured Approach to Strategic Decisions" in the Spring 2019 issue of MIT Sloan Management Review and Decisive: How to Make Better Choices in Life and Work). This article highlights how positive illusion and confirmation bias can significantly degrade the mitigation of cyber and privacy risks, but other cognitive biases also often impede effective decision-making. These include availability bias (i.e., giving more weight to information that easily comes to mind), excessive coherence (i.e., constructing coherent stories from deficient evidence), mental mode (i.e., oversimplifying a complex situation), and representative bias (i.e., over-relying on something similar instead of objective evidence).

We, therefore, suggest that when using sophisticated computing technologies to innovate, life science companies should apply a decision-making system that helps them better mitigate cognitive biases. Other decision support systems include decision theory and advanced analytical models. Our follow-up article will explain how companies can harness decision-making systems like the Mediating Assessment Protocol to cost-effectively mitigate business-threatening cyber risks without unduly interfering with the efficacy of their innovations.

About The Authors:

Elliot Turrini is the CEO of Practical Cyber, a cybersecurity and privacy firm with a medtech specialty. He has been a federal cybercrime prosecutor, a cyber/privacy lawyer, and digital health entrepreneur. He is the co-editor of the book Cybercrimes: A Multidisciplinary Analysis.

Ben Locwin is a healthcare futurist and medtech executive, working to bring the future of better healthcare technology within reach of all patients in need. Most recently during the pandemic, he has been providing oversight to trials of COVID-19 treatments and top vaccine candidates and participating as a member of several state and federal public health task forces.
