Solving Problems More Effectively Than Sherlock Holmes: The Contradiction Matrix

By Ben Locwin, Ph.D.

Part 4 of Identifying And Resolving Errors, Defects, And Problems Within Your Organization — a five-part series on operationalizing proper improvement techniques

Welcome to the fourth article in the series on better investigation and problem-solving methods and principles. In writing this one, I’ve been thinking quite a bit about Sherlock Holmes: not only his exquisite methods, but also the flaws in the metacognition and metaphilosophy behind how the fictitious detective went about his work.

When Sir Arthur Conan Doyle wrote his stories about Holmes, he frequently claimed that his consummate investigator used deductive logic. However, what he actually meant to write (several hundred times) was that Holmes principally focused on inductive and abductive logic, sometimes with a smattering of deduction thrown in. In fact, Holmes was very much and very often NOT a deductive reasoner. So, too, should problem solving in your organization include methods of induction, and this will occur by handling data in a very precise and forensic way.

I’ve solved a larger number of more complex problems than the assiduous resident of 221B Baker Street ever did, and here’s what I’ve distilled and can tell you about the best ways to collect evidence.

Better Data And Information Leads To Better Solutions (On Average)

Published research on having and using data to better forecast the future shows that in virtually every case, the more complete the information you have, the better your ability to decide among courses of action. So, collect as much primary data as you can. What do I mean by “primary” data? In the forensics field, we use this term for data you’ve personally collected and analyzed. It does not include pieces of information you’ve gotten from others, or things they’ve begun to assemble; those introduce unknown levels of bias into the data before you ever see them. These latter pieces of data are called, logically, “secondary” data. Secondary data are easier and less expensive to amass; however, they are seldom as useful and accurate as primary data, because their measurement biases cannot be estimated.

Inductive reasoning allows you to extrapolate from the data and information observed to arrive at conclusions about chains of events and causality that have not been observed. This is problem solving at its very heart. Extrapolation means there are necessarily probabilities associated with the outcomes of investigations, so we also seek to minimize uncertainty where possible. This is similar to statistical hypothesis testing, in which you decide whether to reject a hypothesis and attach a p-value to that decision.

There must be a correlation between pipe smoking and inductive reasoning. If you find one with an associated p-value, please post it in the Comments section below.

It’s Probably Not As Rare As You Think

In medicine we have a precept: “When you hear hoofbeats, think horses, not zebras.” This keeps your mind focused squarely on probabilities when doing differential diagnoses: Certain clinical presentations can call to mind tremendously rare and horrific diseases, but those diseases are correspondingly unlikely to be the cause. This is good and prudent clinical wisdom, for the most part, and it also takes advantage of Occam’s Razor, which states that “plurality (complexity) should not be posited without necessity,” often rendered as “all else being equal, the simplest explanation is most likely the correct one.” (I’ve written more about the consequences of this principle in a previous article.)

So, let’s say you find water on the floor and the desktop near a lab instrument. You could surmise that a ceiling water pipe developed a leak or rapid, extraordinary condensation, which then dripped through the ceiling tiles and onto the desk and floor (you could look for evidence of this). Or, maybe there was a rapid and spontaneous collection of water molecules in vapor form that coalesced locally above the desk and floor.* These are both zebras, though. As you collect more and more (hopefully primary) data at the scene, you would likely find that the lab analyzer instrument had a particular defect, which resulted in a leak that then trickled to the floor with the help of gravity. There is probably also a known record of this instrument defect at the manufacturer, because the failure modes of an instrument or piece of equipment do not all have the same probability of occurring.

When you collect pieces of information and use them to build a narrative of what may have generated those data (directionality, cyclicity, seasonality, or whatever other patterns appear), you are patently performing inductive reasoning. So, a typical defect, nonconformity, or deviation investigation that includes root cause analysis methods will compile and collate data from and around the event in order to better establish linkages for what may have occurred.

I’m going to let you in on a little philosophical secret: Most of this is actually a combination of inductive logic and abductive reasoning. Abductive reasoning is also called “inference to the best explanation.” Well-reasoned abductive conclusions can also help in formulating Bayesian probabilities, but they may themselves suffer from the post hoc, ergo propter hoc fallacy (which I also wrote about in the previously mentioned article).
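To make the link between abduction and Bayesian probability concrete, here is a minimal sketch that ranks the three explanations from the water-on-the-floor example by posterior probability. The priors and likelihoods are invented, hypothetical numbers chosen only to show the mechanics; in a real investigation they would come from your own (ideally primary) data, such as instrument failure histories.

```python
# Minimal sketch: rank competing explanations with Bayes' theorem.
# All priors and likelihoods below are HYPOTHETICAL, for illustration only.


def posteriors(priors: dict[str, float], likelihoods: dict[str, float]) -> dict[str, float]:
    """Return P(H | evidence) for each hypothesis H.

    priors[h]:      P(H), the base rate before looking at the scene
    likelihoods[h]: P(evidence | H), how well H explains what was observed
    """
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    return {h: p / total for h, p in unnormalized.items()}


priors = {
    "instrument leak (horse)": 0.90,
    "ceiling pipe leak (zebra)": 0.0999,
    "spontaneous condensation (zebra)": 0.0001,
}
# Hypothetical chance of seeing water on desk and floor with no stain on the ceiling tiles.
likelihoods = {
    "instrument leak (horse)": 0.6,
    "ceiling pipe leak (zebra)": 0.05,
    "spontaneous condensation (zebra)": 0.01,
}

# Print hypotheses from most to least probable.
for h, p in sorted(posteriors(priors, likelihoods).items(), key=lambda kv: -kv[1]):
    print(f"{h}: {p:.4f}")
```

The prior does most of the work here, which is the “think horses” precept expressed numerically: a zebra only wins if the evidence is far more likely under it than under the horse.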

Tea may be a prerequisite for problem solving. To that end, may I recommend that you read about “the lady tasting tea” experiment described by Sir Ronald Fisher.1 It will help you refine your problem-solving skills.
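Fisher’s design (eight cups, four with milk poured first, and the taster told to pick out those four) is a compact example of the p-value idea mentioned earlier. The sketch below computes the chance that a pure guesser does at least as well as an observed result; the function name and defaults are my own, not Fisher’s.

```python
from math import comb


def lady_tasting_tea_p_value(n_cups: int = 8, n_milk_first: int = 4, n_correct: int = 4) -> float:
    """One-sided p-value for Fisher's tea-tasting design.

    Under the null hypothesis (she is guessing), her choice of n_milk_first cups
    is a random subset, so the number she gets right is hypergeometric.
    Returns P(at least n_correct right by chance).
    """
    n_tea_first = n_cups - n_milk_first
    total = comb(n_cups, n_milk_first)  # every way of choosing the cups
    hits = sum(
        comb(n_milk_first, k) * comb(n_tea_first, n_milk_first - k)
        for k in range(n_correct, n_milk_first + 1)
    )
    return hits / total


print(lady_tasting_tea_p_value(n_correct=4))  # 1/70
print(lady_tasting_tea_p_value(n_correct=3))  # 17/70
```

A perfect score arises by chance only 1 time in 70 (p ≈ 0.014), whereas getting at least 3 of 4 right happens by chance 17 times in 70 (p ≈ 0.24), so in this design only a perfect score is convincing at the conventional 5% level.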

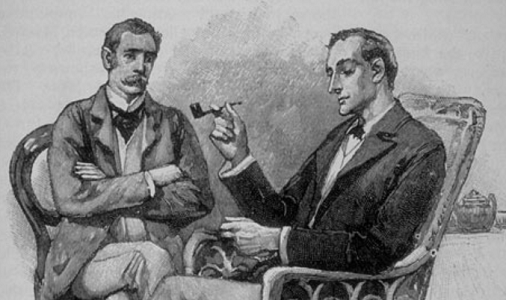

Air France Flight 4590: Concorde, July 25, 2000

The Concorde, Air France 4590 immediately upon takeoff (Note: Flames are incongruous and a contraindicated part of Concorde’s takeoff.)

After: Disaster reconstruction takes place.

On July 25, 2000, Air France Flight 4590, a Concorde, trailed flames during its takeoff and crashed shortly afterward into a hotel in Gonesse, near Charles de Gaulle Airport. One hundred thirteen people died as a result of the fire and crash. What followed was a rapid and relatively robust investigation, which I’ll cover, as well as years of ongoing litigation and finger-pointing, driven by the legal and financial implications of guilt and by uncertainty about the causality of some elements of the event.

Overview Of The Investigation

In just over a month, the investigative team had finalized its draft report and found some interesting pieces of evidence.

After reaching takeoff speed, the tire of the Concorde’s number 2 wheel was cut by a metal wear strip lying on the runway, which had fallen from the thrust reverser cowl door of the number 3 engine of a Continental Airlines DC-10 that had taken off from the same runway five minutes earlier.

Before you consider this an unfortunate one-off event, let me expand a bit on the history of this wear strip that had fallen from the Continental Airlines DC-10:

- This wear strip had been replaced in Tel Aviv, Israel, during what’s called a C maintenance check on June 11, 2000 …

- … and then again in Houston, Texas, on July 9, 2000 (16 days prior to the crash of Air France 4590).

- The strip installed in Houston had been neither manufactured nor installed in accordance with the procedures as defined by the manufacturer.

In the parlance of statistical process control, following Walter Shewhart, we would call the wear-strip fiasco above an “assignable cause” (W. Edwards Deming’s later term is “special cause”). Additionally, when you’re in an aircraft hurtling down the runway at takeoff or landing speed, one of the critical presuppositions you’d like to make is that the runway is clear of debris.
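For readers new to SPC, the sketch below shows how a Shewhart-style individuals (X) chart separates routine variation from an assignable (special) cause: limits are computed from a stable baseline as the mean plus or minus 2.66 times the average moving range, and later points outside those limits are flagged. The measurements are hypothetical; nothing here comes from the Concorde investigation.

```python
from statistics import mean


def individuals_limits(baseline: list[float]) -> tuple[float, float, float]:
    """Natural process limits for an individuals (X) chart.

    Uses the standard constant 2.66 (= 3 / d2, with d2 = 1.128 for moving ranges of size 2).
    Returns (lower limit, center line, upper limit).
    """
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    center = mean(baseline)
    spread = 2.66 * mean(moving_ranges)
    return center - spread, center, center + spread


def special_cause_points(values: list[float], lcl: float, ucl: float) -> list[int]:
    """Indices of points outside the limits: candidates for an assignable cause."""
    return [i for i, v in enumerate(values) if v < lcl or v > ucl]


# Hypothetical baseline measurements from a stable process.
baseline = [10.1, 9.8, 10.0, 10.3, 9.9, 10.2, 10.0, 9.7, 10.1, 10.0]
lcl, center, ucl = individuals_limits(baseline)

# Hypothetical new measurements; one sits far outside the usual variation.
new_values = [10.2, 9.9, 12.4, 10.0]
print(f"LCL={lcl:.2f}  CL={center:.2f}  UCL={ucl:.2f}")
print("special-cause candidates at indices:", special_cause_points(new_values, lcl, ucl))
```

Computing the limits from a baseline period, rather than from data that already include the suspect point, keeps a single large excursion from inflating the limits and hiding itself.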

Are Tire Events Uncommon On Runways That Should Be Clear?

A rapid investigation was mobilized by BEA, the French Bureau of Enquiry and Analysis for Civil Aviation Safety. I have cited that report in the references and included some data and information from it to expand on the discussion. (Give some thought to whether you would consider this primary or secondary data.)

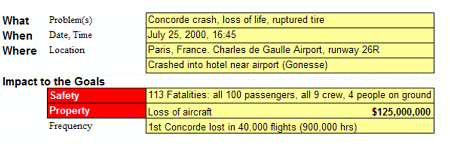

An investigation of events “which had involved tyres or landing gear on the Concorde since its entry into service” was conducted. The information collected to establish the list of events came from the archives of the European Aeronautic Defence and Space Company (EADS, now known as Airbus SE), Air France, British Airways, BEA, the UK Air Accidents Investigation Branch (AAIB), the French Civil Aviation Authority (DGAC), the UK Civil Aviation Authority (CAA), and Dunlop. The report documents:

“In the list, there are fifty-seven [57] cases of tyre bursts/deflations, thirty [30] for the Air France fleet and twenty-seven [27] for British Airways:

- Twelve of these events had structural consequences on the wings and/or the tanks, of which six led to penetration of the tanks.

- Nineteen of the tyre bursts/deflations were caused by foreign objects.

- Twenty-two events occurred during takeoff.”2
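Expressing the BEA counts as proportions makes the pattern easier to see. The short sketch below does only that arithmetic on the figures quoted above; the dictionary labels are my own shorthand.

```python
# Counts quoted from the BEA report's list of Concorde tyre bursts/deflations.
TOTAL_EVENTS = 57  # 30 Air France + 27 British Airways

subsets = {
    "structural consequences on wings and/or tanks": 12,
    "tank penetration": 6,  # a subset of the 12 structural events
    "caused by foreign objects": 19,
    "occurred during takeoff": 22,
}

for label, count in subsets.items():
    print(f"{label}: {count}/{TOTAL_EVENTS} = {count / TOTAL_EVENTS:.1%}")

# Conditional share: of the events with structural consequences, how many penetrated a tank?
print(f"tank penetration given structural damage: {6 / 12:.0%}")
```

Roughly one burst in five damaged the structure, one in three was caused by a foreign object, and half of the structural-damage events went on to penetrate a tank.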

In fact, almost 20 years before this event, in November 1981, the American National Transportation Safety Board (NTSB) sent a letter of concern to the French BEA that included safety recommendations for Concorde. This communiqué was the result of the NTSB's investigations of four Air France Concorde incidents during a 20-month period from July 1979 to February 1981. The NTSB described those incidents as "potentially catastrophic," because they included blown tires during takeoff.

During its 27 years in service, Concorde had about 70 tire- or wheel-related incidents, seven of which caused serious damage to the aircraft or were potentially catastrophic. (Author’s note to practitioners: If your processes have this high and repeatable a set of failure modes and rates, fix your processes. Immediately. Your staff, companies, and customers will thank you.)

At any rate, because blown tires propelled objects into the Concorde’s fuel tanks and fuselage, the following plot was generated, showing the locations of some of the impacts “which caused structural damage to tanks”:2

By the way, this plot is actually a concentration diagram, which I’ll discuss in more detail in the next and final article in the series.

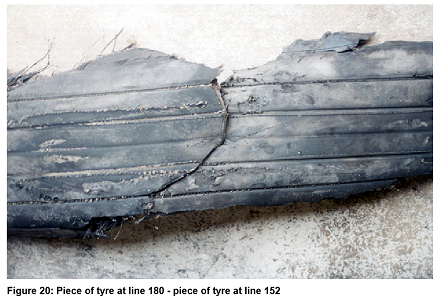

Here is that tire (tire on number 2 wheel) from the ill-fated Concorde:

Conclusions

The BEA report lists a chain of causality, paraphrased here: Five minutes before the Concorde departed, a Continental Airlines McDonnell Douglas DC-10-30 took off from the same runway for Newark International Airport and lost a titanium alloy strip that was part of the engine cowl, identified as a wear strip about 435 millimeters (17.1 in) long, 31 millimeters (1.2 in) wide, and 1.4 millimeters (0.055 in) thick. The Concorde ran over this piece of debris during its takeoff run, cutting a tire and sending a large chunk of tire debris (4.5 kilograms, or 9.9 pounds) into the underside of the aircraft's wing at an estimated speed of 140 meters per second (310 mph). The debris did not directly puncture any of the fuel tanks, but it sent out a pressure shockwave that ruptured the number 5 fuel tank at its weakest point, just above the undercarriage. Fuel gushing out from the bottom of the wing was most likely ignited either by an electric arc in the landing gear bay (from debris cutting a landing gear wire) or through contact with hot parts of the engine.

I mentioned assignment of blame and litigation earlier, and here’s some of the follow-up story: In March 2008, Bernard Farret, a deputy prosecutor near Paris, asked judges to bring manslaughter charges against Continental Airlines and two of its employees, the mechanic who replaced the wear strip on the DC-10 (John Taylor) and the mechanic’s manager (Stanley Ford). The prosecutor alleged negligence in the repair work. Continental Airlines denied the charges and suggested an alternative theory: that the Concorde "was already on fire when its wheels hit the titanium strip, and that around 20 first-hand witnesses had confirmed that the plane seemed to be on fire immediately after it began its take-off roll."3

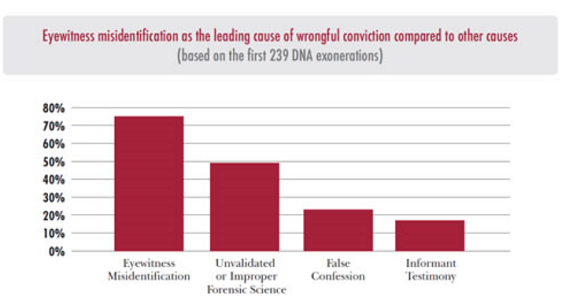

A Note On The Value And Validity Of Eyewitness Testimony For Collecting Evidence

Though the witness accounts in the last sentence above may have come as a great relief to Continental Airlines, in many criminal investigations the value of eyewitness testimony asymptotically approaches zero. In fact, I’ve worked with the American Psychological Association and on forensic investigations that involved discussions of the veracity of eyewitness accounts. Data collected for research on the forensic value of eyewitness testimony suggest that it is wrong about half the time. Basically, treat eyewitness testimony as no better than a coin flip. Unfortunately, juries are swayed to a degree approaching unity when listening to eyewitnesses.4

She’s pointing at someone who she thinks is the suspect. But she’s probably incorrect.

I like Neil deGrasse Tyson’s observation here: “No matter what eyewitness testimony is in the court of law, it is the lowest form of evidence in the court of science.”

Thinking About The Level Of Detail And Complexity Needed

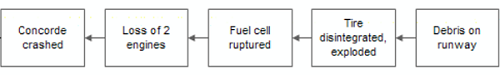

Here is a simple cause map detailing (or rather, anti-detailing) the Flight 4590 event. Notably, it doesn’t include the relevant and necessary details needed to develop an inductive hypothesis.

What were the antecedents to “Debris on runway”? “Fuel cell ruptured”? These turn out to be some of the most critical elements of the investigative chain. The debris on the runway preceding Flight 4590 was left by the DC-10 whose questionable part and repair history was a known source of defects and nonconformities. It’s literally criminal to omit that from the chain of causality, since we know it to be a part. When you’re doing an investigation or risk analysis, always ask: What happened? To what extent?
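One way to keep a cause map honest is to store it as a graph and have the computer list every cause that has no recorded antecedent; each one is an unanswered “why?”. The sketch below encodes a hypothetical, simplified map whose node names are paraphrased from the BEA causal chain described earlier; the names and structure are my illustration, not the figure’s exact content.

```python
# Hypothetical cause map: each effect maps to the causes recorded for it.
# Node names are paraphrased for illustration, not copied from the figure.
cause_map: dict[str, list[str]] = {
    "Aircraft crashed": ["Fire under wing", "Loss of engine thrust"],
    "Fire under wing": ["Fuel cell ruptured", "Ignition source"],
    "Fuel cell ruptured": ["Tire debris struck wing"],
    "Tire debris struck wing": ["Tire cut"],
    "Tire cut": ["Debris on runway"],
}


def unexplained_causes(graph: dict[str, list[str]]) -> list[str]:
    """Return causes that appear in the map but have no antecedents of their own."""
    all_causes = {c for causes in graph.values() for c in causes}
    return sorted(c for c in all_causes if not graph.get(c))


print(unexplained_causes(cause_map))
```

Each name in the output is a prompt to keep asking why; here “Debris on runway” would lead the investigation back to the DC-10’s wear strip and its repair history.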

The Contradiction Matrix

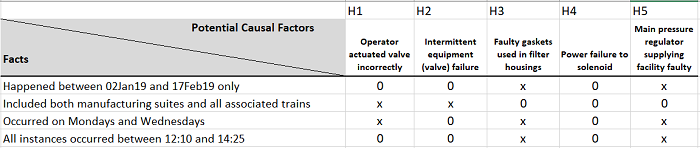

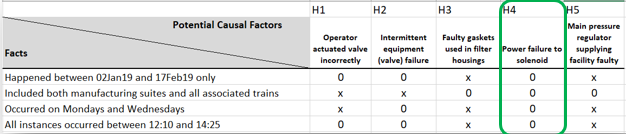

The contradiction matrix derives naturally from TRIZ (the Russian acronym for Teoriya Resheniya Izobretatelskikh Zadach, or the Theory of Inventive Problem Solving), but we’re going to use it a bit differently. It looks like this:

In this example, the group started from the problem statement “pressure surge caused breakthrough of membrane filtration and leak-by of ball valves.” So, we put what is known about the event (what we consider to be the facts) down the y-axis. Then we put the hypotheses across the top, along the x-axis. I’ve further labeled these for clarity as “H1 through H5,” where Hn is short for “hypothesis n.”

As we read down the y-axis for each hypothesis, a fact that agrees with the hypothesis gets a 0, and a fact that contradicts it gets an x. Alternatively, you can use ✓ and ✗.

Interestingly, this exercise correctly showed that operator error (mis-specification and setting of a series of valve positions) wasn’t the problem. Instead, there was an issue with the backup power supply, and a main solenoid supplying both manufacturing areas was intermittently affected (see the highlighted hypothesis in the matrix). This gave the events a sporadic and seemingly (potentially) non-random pattern.

What this tool helps with is challenging simultaneous and competing hypotheses in situations where testing or experimentation of all of them would be prohibitively expensive or time-consuming. You can reduce five, 10, or perhaps 15+ competing hypotheses down to a critical few using observed facts of the event. If you still have two or three to choose from, you can more reasonably test them to isolate the most likely root cause(s).
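The marking-and-elimination logic can be sketched in a few lines of Python. Everything in the example is hypothetical: the facts, the three hypotheses (loosely modeled on the pressure-surge case), and the cell values are invented for illustration and are not the group’s actual matrix. A cell holds “0” when the fact agrees with the hypothesis, “x” when the fact contradicts it, and “?” when it is not yet known.

```python
# Hypothetical contradiction matrix: facts (rows) x hypotheses (columns).
# "0" = fact agrees with the hypothesis, "x" = fact contradicts it, "?" = unknown.
hypotheses = [
    "H1: operator mis-set valve positions",
    "H2: backup power fault affecting shared solenoid",
    "H3: pump controller failure",  # invented for illustration
]
matrix: dict[str, list[str]] = {
    "Surges recurred across different shifts and operators": ["x", "0", "0"],
    "Both manufacturing areas were affected": ["x", "0", "?"],
    "Valve positions were verified correct after each event": ["x", "0", "0"],
    "Events were intermittent rather than continuous": ["0", "0", "x"],
}


def contradiction_counts(hyps: list[str], mat: dict[str, list[str]]) -> dict[str, int]:
    """Count the facts (cells marked 'x') that contradict each hypothesis."""
    return {h: sum(row[i] == "x" for row in mat.values()) for i, h in enumerate(hyps)}


def surviving(hyps: list[str], mat: dict[str, list[str]]) -> list[str]:
    """Keep only hypotheses that no known fact contradicts ("eliminate the impossible")."""
    counts = contradiction_counts(hyps, mat)
    return [h for h in hyps if counts[h] == 0]


for h, n in sorted(contradiction_counts(hypotheses, matrix).items(), key=lambda kv: kv[1]):
    print(f"{n} contradiction(s): {h}")
print("Survivors:", surviving(hypotheses, matrix))
```

Keeping the contradiction counts, rather than just deleting columns, also ranks the near-misses, which helps when two or three hypotheses remain and must be tested directly. One caution: a single x eliminates a hypothesis only if you trust that fact, so questionable facts deserve verification before they are used to rule anything out.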

Operationalizing The Contradiction Matrix

To create your own contradiction matrix, I suggest starting with a large blank wall space or a big sheet of flipchart paper stuck on the wall. Then create your x-y grid, where the left-side y-axis is a vertical list of the known facts of the event. Across the top x-axis, list the inductive hypotheses you’d like to test “against” your facts for veracity. If any cells contain blanks or question marks instead of an x or a 0, you may need to collect more data for that particular hypothesis.
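The same encoding makes the “collect more data” step mechanical. The sketch below, using a small hypothetical matrix with placeholder fact names, lists the blank or “?” cells for each hypothesis that has not already been contradicted. Skipping contradicted hypotheses is my own choice, on the reasoning that filling their gaps will not revive them unless the contradicting fact itself is in doubt.

```python
# Hypothetical matrix; "" (blank) and "?" both mean the cell has not been resolved.
hypotheses = ["H1", "H2", "H3"]
matrix: dict[str, list[str]] = {
    "Fact A": ["0", "x", "?"],
    "Fact B": ["0", "", "0"],
    "Fact C": ["?", "0", "?"],
}


def data_gaps(hyps: list[str], mat: dict[str, list[str]]) -> dict[str, list[str]]:
    """Map each still-viable hypothesis to the facts whose cells are blank or '?'."""
    gaps: dict[str, list[str]] = {}
    for i, h in enumerate(hyps):
        column = {fact: cells[i].strip() for fact, cells in mat.items()}
        if "x" in column.values():
            continue  # already contradicted; more data is unlikely to rescue it
        missing = [fact for fact, cell in column.items() if cell in ("", "?")]
        if missing:
            gaps[h] = missing
    return gaps


for h, facts in data_gaps(hypotheses, matrix).items():
    print(f"{h}: collect data on {', '.join(facts)}")
```

The output is effectively a data-collection plan: each line names a hypothesis that is still in play and the facts you need to resolve before you can keep or eliminate it.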

For your facts, populate your contradiction matrix thusly: For each element that is known about the event or occurred around the spatial location and/or time of the event, document it. Then, for the successive hypotheses (H1-Hn), list them with sufficient detail that your problem-solving team knows how to respond to fill in the matrix.

The matrix is a clearinghouse for all the relevant or potentially relevant information to be screened as part of your investigative processes. Don’t be reductionist in populating it; put in everything you can and let the matrix do its work by showing all the known linkages, possible linkages, potentially unrelated linkages, spurious correlations, and red herrings.** Sherlock Holmes himself noted: "It is a capital mistake to theorize before you have all the evidence. It biases the judgment."

Sherlock Holmes stated the logic underpinning the contradiction matrix in The Sign of the Four: “When you have eliminated the impossible, whatever remains, however improbable, must be the truth.”

Solving problems and sipping tea: If you’re (correctly) brainstorming with other group members, make sure they’re less comatose and a bit more focused than Watson in the above picture.

Endnotes:

*This is a reformulation of the classic entropy discussion: it is exceedingly rare, though possible, for all of the air molecules in a room to spontaneously gather in one corner, suffocating the room’s occupants. Thankfully, given the number of gas molecules in a room, the probability is approximately zero.
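The “approximately zero” can be made concrete with an idealized model, assumed here for illustration: each of N molecules is independently equally likely to be in either half of the room, so the chance that all of them are in one particular half is (1/2)^N (confining them to a corner would be rarer still). That number underflows ordinary floating point long before N reaches room scale, so the sketch works with its base-10 logarithm; N ≈ 10^27 is a rough order-of-magnitude figure for the air in an ordinary room.

```python
import math


def log10_prob_all_in_one_half(n_molecules: int) -> float:
    """log10 of (1/2)**n, the chance that n independent molecules all sit in one chosen half."""
    return -n_molecules * math.log10(2)


for n in (10, 100, 10**27):
    exponent = log10_prob_all_in_one_half(n)
    print(f"N = {n:.0e}: probability is about 10^{exponent:.3g}")
```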

**For readers who don’t read detective literature, the “red herring” was an Agatha Christie favorite. The phrase likely derives from uses found between the 1690s and 1780s, when “red herring” referred to a false prize at the end of arduous service or to a dog-training scent (also used by escaping convicts to throw off scent-tracking dogs). Every investigation has erroneous pathways leading from it that could rightly be called red herrings. Look for and eliminate these.

References and Important Further Reading:

- Fisher, R. A. (1935). The Design of Experiments (“the lady tasting tea”). Edinburgh: Oliver & Boyd.

- BEA. (2000). Accident on 25 July 2000 at La Patte d’Oie in Gonesse (95) to the Concorde registered F-BTSC operated by Air France. https://www.bea.aero/docspa/2000/f-sc000725a/pdf/f-sc000725a.pdf

- Cosgrove, M. (2010). “Concorde crash remains unresolved 10 years later.” Digital Journal. http://www.digitaljournal.com/article/295099

- Stambor, Z. (2006). “How reliable is eyewitness testimony?” Monitor on Psychology. https://www.apa.org/monitor/apr06/eyewitness

About The Author:

Ben Locwin, PhD, MBA, MS, MBB, is a medical device and pharma executive and a member of several advisory boards and boards of directors across the industry and was the former president of a healthcare consulting organization. He is a world-renowned process improvement theorist and practitioner, and science communicator and popularizer. He created many of the frameworks for risk management and advanced process improvement currently in use across many industries and has worked across the drug life cycle from early phase to commercial manufacturing and marketing (GLP, GCP, GVP, GMP). He frequently keynotes events and conferences on these current topics. Connect with him on LinkedIn and/or Twitter.
